Artificial intelligence is rapidly transforming how the web is crawled and indexed. A growing number of automated bots now scan websites to power AI search engines, train large language models, and build smarter digital assistants. In this guide, we explore the top AI user agents and crawlers in 2026, helping website owners and SEO professionals understand which bots access their websites and how to manage them effectively.

In the past, most website owners only needed to think about traditional search crawlers such as Googlebot or Bingbot. Today they also need to account for AI user agents and crawlers that scan websites to train models, power AI search engines, and build knowledge systems.

For website owners, developers, and SEO professionals, understanding these bots is now essential. Knowing which AI user agents and crawlers access your website helps you control indexing, protect content, and make informed decisions about allowing or blocking them through robots.txt.

What Are AI User Agents and Crawlers?

A user agent is a string sent by a bot, browser, or crawler to identify itself when requesting content from a website. Crawlers use these user agents to inform servers about their purpose.
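As a rough illustration of how a server might recognise these identifiers, the Python sketch below looks for the crawler names covered later in this guide inside a request's User-Agent header. The function name `identify_ai_crawler` and the sample header strings are made up for illustration. Real crawlers send longer strings, and a header can be spoofed, so a match is a hint rather than proof.

```python
# Crawler name tokens taken from the table in this guide.
# Google-Extended is left out: per Google's crawler documentation it is a
# robots.txt control token and is not sent as a request user agent.
AI_CRAWLER_TOKENS = (
    "GPTBot", "ChatGPT-User", "OAI-SearchBot", "GoogleOther", "bingbot",
    "PerplexityBot", "ClaudeBot", "Applebot", "CCBot",
)


def identify_ai_crawler(user_agent_header):
    """Return the first known crawler token found in the header, else None."""
    lowered = user_agent_header.lower()
    for token in AI_CRAWLER_TOKENS:
        if token.lower() in lowered:
            return token
    return None


# Hypothetical header values, shortened for readability.
print(identify_ai_crawler("Mozilla/5.0 (compatible; GPTBot/1.0)"))
print(identify_ai_crawler("Mozilla/5.0 (Windows NT 10.0) Firefox/120.0"))
```

The first call prints `GPTBot` and the second prints `None`. Matching is case-insensitive because sites and bots do not always agree on capitalisation.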

Traditional search engines use crawlers to index pages for search results. AI systems now use similar crawlers to gather data for training large language models, powering AI assistants, and improving search experiences.

These crawlers often follow standard web conventions and respect rules defined in the robots.txt file. Website owners can use these rules to control whether bots are allowed or disallowed from crawling specific sections of a site.

For example, a simple robots.txt directive might look like this:

User-agent: GPTBot

Disallow: /

This rule blocks the GPTBot crawler from accessing the entire site.
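One way to sanity-check a rule like this before publishing it is to run it through Python's standard-library `urllib.robotparser`. The sketch below is only a local check under that parser's interpretation of the file; the helper name `is_allowed` and the `example.com` URL are placeholders, and individual crawlers may interpret edge cases differently.

```python
from urllib.robotparser import RobotFileParser

# The rule from the text. Directives in one group sit on consecutive
# lines, because Python's parser treats a blank line as the end of a group.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /
"""


def is_allowed(robots_txt, agent, url):
    """Parse robots.txt text and report whether `agent` may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# example.com is a placeholder domain.
print(is_allowed(ROBOTS_TXT, "GPTBot", "https://example.com/blog/post"))
print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/blog/post"))
```

This prints `False` for GPTBot and `True` for Googlebot, since no group in the file applies to Googlebot.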

As AI continues to reshape the web, keeping an up-to-date list of AI user agents becomes increasingly important.

Why Website Owners Should Care About AI Crawlers

AI crawlers can have both positive and negative effects on your website.

Potential benefits

- Your content may be used to improve AI search engines

- AI-powered search assistants may recommend your site

- Increased visibility through AI-generated responses

Potential concerns

- AI models may use your content without sending traffic

- Increased server load from automated crawling

- Intellectual property concerns for publishers

For these reasons, many publishers are now reviewing the user agents listed in their robots.txt files to decide which bots should be allowed.
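To judge how much load AI crawlers actually add, it can help to count their requests in the server's access log. The sketch below assumes logs in the common "combined" format, where the user agent is the last quoted field; the log lines, the token selection, and the function name `count_ai_crawler_hits` are illustrative assumptions rather than output from a real site.

```python
import re
from collections import Counter

# A subset of the crawler names covered in this guide.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot")

# In the combined log format the user agent is the last quoted field.
USER_AGENT_FIELD = re.compile(r'"([^"]*)"\s*$')


def count_ai_crawler_hits(log_lines):
    """Tally requests per crawler token across access-log lines."""
    hits = Counter()
    for line in log_lines:
        match = USER_AGENT_FIELD.search(line)
        if not match:
            continue  # Skip lines that do not end with a quoted field.
        user_agent = match.group(1).lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in user_agent:
                hits[token] += 1
                break
    return hits


# Made-up log lines using documentation-reserved IP addresses.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [10/Jan/2026:10:00:02 +0000] "GET /blog/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:01:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.44 - - [10/Jan/2026:10:02:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0) Firefox/120.0"',
]

print(count_ai_crawler_hits(SAMPLE_LOG))
```

On the sample lines this counts two GPTBot requests and one ClaudeBot request, and ignores the browser visit.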

Understanding the top AI user agents and crawlers helps you make informed decisions.

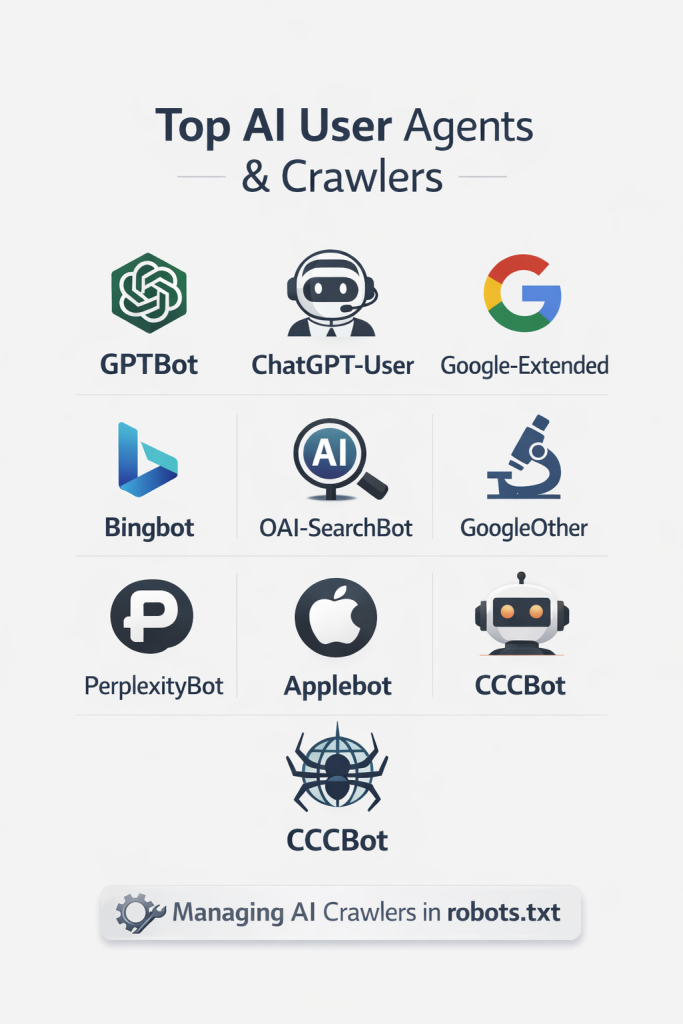

Table of Top AI User Agents and Crawlers

Below is a quick overview of the most important AI search crawlers and user agents currently active across the web.

| AI Bot | Company | Purpose | Robots.txt Support |
|---|---|---|---|
| GPTBot | OpenAI | Collects data for AI models like ChatGPT | Yes |
| ChatGPT-User | OpenAI | Fetches pages when users request browsing | Yes |
| Google-Extended | Google | Controls AI training access for Google models | Yes |
| GoogleOther | Google | Experimental crawling for research | Yes |
| Bingbot | Microsoft | Web indexing for Bing and AI features | Yes |
| OAI-SearchBot | OpenAI | Powers AI search experiences | Yes |
| PerplexityBot | Perplexity AI | Crawls pages for AI answers | Yes |
| ClaudeBot | Anthropic | Training data for Claude AI models | Yes |
| Applebot | Apple | Indexing for Apple services and AI | Yes |
| CCBot | Common Crawl | Public web dataset used for AI training | Yes |

Now, let us explore each AI user agent and crawler in more detail.

Top AI User Agents and Crawlers Explained

GPTBot

GPTBot is one of the most widely discussed AI crawlers today. It is operated by OpenAI and is designed to gather publicly available data that helps improve AI models.

OpenAI introduced GPTBot to give website owners transparency and control over whether their content can be used for AI training.

If allowed, GPTBot may crawl pages to improve language models used in AI systems.

Example user agent:

User-agent: GPTBot

Example robots.txt rule to block it:

User-agent: GPTBot

Disallow: /

Many publishers choose to review this crawler carefully because it is directly connected to AI model training.

ChatGPT-User

ChatGPT-User is different from GPTBot. Instead of collecting training data, this crawler is used when ChatGPT fetches content on behalf of a user request.

For example, if a user asks ChatGPT to retrieve or summarize a page, this crawler may access the page.

Example user agent:

User-agent: ChatGPT-User

Example robots.txt rule:

User-agent: ChatGPT-User

Allow: /

This bot behaves more like a normal browser request initiated by an AI assistant.

OAI-SearchBot

OAI-SearchBot supports OpenAI search-related features. It helps AI systems discover and retrieve information from websites, providing more accurate answers.

This crawler plays a role similar to traditional search crawlers, but focuses on AI-driven search results.

Example user agent:

User-agent: OAI-SearchBot

Robots.txt rule example:

User-agent: OAI-SearchBot

Disallow: /private/

Website owners can selectively allow or block sections depending on their preferences.
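Path rules such as `Disallow: /private/` are matched as prefixes, which is easy to get subtly wrong. The sketch below uses Python's standard-library `urllib.robotparser` to show which of a few hypothetical paths the rule above covers; the path names and the helper `blocked_paths` are made up for illustration, and the result reflects that parser's behaviour rather than a guarantee about any particular crawler.

```python
from urllib.robotparser import RobotFileParser

# The selective rule from the text, with both directives on adjacent lines.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def blocked_paths(agent, paths):
    """Return the paths that `agent` may not fetch under ROBOTS_TXT."""
    return [path for path in paths if not parser.can_fetch(agent, path)]


# Hypothetical site paths.
PATHS = ["/private/report.html", "/private/", "/privately-held/", "/blog/"]
print(blocked_paths("OAI-SearchBot", PATHS))
```

Only `/private/report.html` and `/private/` are reported as blocked. `/privately-held/` stays crawlable because it does not start with the full `/private/` prefix, trailing slash included.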

Google-Extended

Google introduced Google-Extended to give publishers control over whether their content can be used for training Google AI models.

This user agent is separate from Googlebot, which continues to index websites for Google Search.

Example user agent:

User-agent: Google-Extended

Example rule:

User-agent: Google-Extended

Disallow: /

Google-Extended works as a robots.txt control token rather than a separate crawler, so disallowing it does not affect normal search indexing. It only prevents the use of content for certain Google AI systems.

GoogleOther

GoogleOther is a crawler used by Google for experimental purposes and research. It may crawl pages to test new features, including AI-driven technologies.

Example user agent:

User-agent: GoogleOther

Example robots.txt rule:

User-agent: GoogleOther

Allow: /

This bot usually respects robots.txt rules just like other Google crawlers.

Bingbot

Bingbot is Microsoft’s primary crawler, used to index websites for Bing. It also supports AI experiences such as Microsoft Copilot.

Although it is a traditional search crawler, it is increasingly involved in AI search results.

Example user agent:

User-agent: bingbot

Example robots.txt rule:

User-agent: bingbot

Disallow: /private/

Because Bing powers many services, including AI-powered search assistants, allowing Bingbot can increase visibility across multiple platforms.

PerplexityBot

PerplexityBot is part of the Perplexity AI search engine. The crawler gathers data to provide direct answers through its AI-powered interface.

Perplexity has quickly gained popularity as an alternative AI search platform.

Example user agent:

User-agent: PerplexityBot

Example robots.txt rule:

User-agent: PerplexityBot

Disallow: /

Some publishers choose to restrict this bot depending on how they want their content used.

ClaudeBot

ClaudeBot is operated by Anthropic and is used to collect data for training and improving the Claude AI models.

Anthropic identifies this crawler publicly so that website owners can see how publicly available web data is collected for its models.

Example user agent:

User-agent: ClaudeBot

Example robots.txt rule:

User-agent: ClaudeBot

Disallow: /

If blocked, Anthropic’s systems should avoid using content from the site for training.

Applebot

Applebot is used by Apple services, including Siri, Spotlight search, and other Apple ecosystem features.

While it began as a traditional search crawler, Apple is increasingly integrating AI capabilities into its services.

Example user agent:

User-agent: Applebot

Example robots.txt rule:

User-agent: Applebot

Allow: /

Allowing Applebot ensures that your content can appear in Apple-powered search experiences.

CCBot

CCBot is the crawler used by Common Crawl, a non-profit organization that maintains a massive open dataset of web pages.

This dataset is frequently used by AI researchers and companies to train machine learning models.

Example user agent:

User-agent: CCBot

Example robots.txt rule:

User-agent: CCBot

Disallow: /

Blocking this crawler prevents your content from being included in future Common Crawl datasets.

How to Manage AI User Agents in robots.txt

Managing AI user agents in robots.txt is straightforward once you know which bots you want to control.

The robots.txt file sits at the root of your website and provides instructions to crawlers.

Example structure:

User-agent: GPTBot

Disallow: /

User-agent: ClaudeBot

Disallow: /

User-agent: Googlebot

Allow: /

In this example:

- GPTBot is blocked

- ClaudeBot is blocked

- Googlebot is allowed

This allows normal search indexing while restricting AI training crawlers.
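If the list of bots changes often, the file can be generated from a short policy instead of being edited by hand. The following Python sketch is one possible approach, not a standard tool: `build_robots_txt` is a hypothetical helper that writes one group per agent, and the generated text is parsed back with the standard-library `urllib.robotparser` as a quick self-check.

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(blocked, allowed):
    """Render one group per agent: blocked agents first, then allowed ones."""
    groups = [f"User-agent: {agent}\nDisallow: /" for agent in blocked]
    groups += [f"User-agent: {agent}\nAllow: /" for agent in allowed]
    # A blank line separates groups; directives inside a group stay adjacent.
    return "\n\n".join(groups) + "\n"


robots_txt = build_robots_txt(
    blocked=["GPTBot", "ClaudeBot"],
    allowed=["Googlebot"],
)
print(robots_txt)

# Parse the generated text back to confirm it behaves as intended.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
for agent in ("GPTBot", "ClaudeBot", "Googlebot"):
    print(agent, parser.can_fetch(agent, "/"))
```

The self-check reports `False` for GPTBot and ClaudeBot and `True` for Googlebot, matching the example above.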

Many publishers are now reviewing their user agents lists in robots.txt to decide which bots should have access.

How AI Crawlers Are Changing SEO

AI-powered search engines are fundamentally changing how websites gain visibility.

Instead of only relying on traditional search results, users now receive direct answers generated by AI systems. These answers may include references to sources or summaries from multiple websites.

This means websites may gain exposure even if users never click through.

For SEO professionals, the rise of AI search crawlers introduces new considerations.

Content quality matters more

AI systems prioritize authoritative and well-structured information.

Structured data becomes valuable

Clear formatting helps AI systems better understand content.

Brand visibility is important

Even if traffic decreases, brand mentions in AI answers can still bring recognition.

As AI search continues to evolve, understanding top AI user agents and crawlers becomes part of a modern SEO strategy.

Frequently Asked Questions

What are AI user agents?

AI user agents are identifiers used by bots that crawl websites for artificial intelligence-related purposes, such as training models or powering AI search engines.

They function similarly to traditional search engine crawlers but focus on collecting data for AI systems.

How can I block AI crawlers?

You can block them using the robots.txt file.

Example:

User-agent: GPTBot

Disallow: /

This rule prevents GPTBot from crawling your website.

Are AI crawlers bad for SEO?

Not necessarily. Some AI crawlers can increase visibility in AI-powered search experiences. However, some publishers prefer blocking them if they do not want their content used for AI training.

Do AI crawlers respect robots.txt?

Most major companies, such as OpenAI, Google, Microsoft, and Anthropic, publicly state that their crawlers respect robots.txt rules. However, malicious bots may ignore these rules.

Should I allow or block AI crawlers?

It depends on your goals. If you want visibility in AI search tools, allowing them can help. If protecting your content is more important, you may choose to block them.

How can I check whether a page is allowed for a specific user agent?

You can use the SEO Action Plan Chrome extension to analyse robots.txt user agents. Install the extension and open it, then click the Settings gear at the top and select the Crawler User Agent from the list of 30+ user agents. The analysis then shows whether the page is allowed for the selected user agent.
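For a scripted check, a similar allowed-or-blocked report can be approximated with Python's standard-library `urllib.robotparser`. This is a minimal sketch and is unrelated to how the extension works internally; the robots.txt text, the `example.com` URL, and the helper name `crawl_report` are assumptions made for the example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one AI crawler is blocked outright, and every
# other agent falls back to the wildcard group.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawl_report(robots_txt, url, agents):
    """Map each agent name to True (allowed) or False (blocked) for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Googlebot"]
report = crawl_report(ROBOTS_TXT, "https://example.com/blog/", AGENTS)
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Here only GPTBot is reported as blocked for the blog URL, while a URL under `/admin/` would be blocked for every agent. To test a live site instead of inline text, `RobotFileParser` also provides `set_url()` and `read()` to download the file first.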

Final Thoughts

The web is entering a new phase where AI user agents and crawlers play an important role in how information is discovered and used.

For website owners and SEO professionals, understanding the top AI user agents and crawlers in 2026 is no longer optional. These bots influence how content is indexed, analyzed, and presented in AI-driven search experiences.

By maintaining an updated user agents list, monitoring crawl behavior, and configuring your robots.txt properly, you can stay in control of how your content interacts with AI systems.

The landscape will continue evolving as new AI platforms emerge. Keeping track of these crawlers ensures your website remains optimized, protected, and prepared for the future of search.